Why I’m Building Local AI Companions, Not Another Chatbot

Most AI products we use today have two things in common.

They run in the cloud.

And they live inside a chat window.

That is not a bad thing by itself. Quite the opposite. The large models from OpenAI, Anthropic, Google, DeepSeek, and others are impressive. They can write, explain, code, summarize, plan, and act in the digital world in ways that would have felt unimaginable five years ago.

We use these systems every day and throw all kinds of information into them. Often without really questioning what happens to that data, or what could happen to it in the future.

Most of the time, the problem is not a single question we ask in between tasks, or one isolated prompt. The problem is what starts to emerge from all of it.

The way I ask. What I am working on. The problems I keep running into. How I think. What I plan. What I try. Personal problems and behavioral patterns.

If you talk to an AI system long enough, something like a digital shadow starts to form. Not a perfect twin, but an increasingly detailed picture of who you are, what you care about, and how you make decisions.

In my opinion, it is genuinely fascinating that we can now talk to a computer at this level. But it is also uncomfortable, because we do not really know what will be done with our data and what it could be used for later.

I am a huge fan of personal AI assistants that understand me better over time, learn how I work, remember context, and so on. But I do not like who gets to hold that context. I do not like that this personal context automatically belongs to a cloud provider and can potentially be used by them.

That is why I have been interested in local LLMs for quite a while.

Local models are not always as strong as the best cloud models yet. They need the right hardware. Sometimes they are slow. Sometimes they are stubborn and need some tuning. But with a bit of work, you can already build really cool things with them.

Still, they have one decisive advantage:

They run on your machine.

Your conversations do not automatically leave your computer. Your ideas, experiments, personal notes, and workflows do not have to travel through someone else’s server before they become useful.

I do not think local AI is cooler just because it is more technically nerdy. I think it is essential because personal AI should actually belong to us and serve us. Privacy and local AI are topics I will keep coming back to.

But privacy is not everything.

If we are honest, the chat window is also a very limited interface. It is practical. It is fast. It is familiar. You can put a lot of dense information into it. But it does not really feel alive.

You write text into a field. A model writes text back. Maybe there are a few buttons, a few threads, maybe a nice UI. But in the end, it usually remains a textbox.

I think there are more interesting interfaces for personal AI. Especially because AI needs more presence if it is going to become part of our everyday lives. Not just a ghost living in a chatbox, or a voice mumbling out of a speaker.

At the beginning, this does not have to mean a robot body, a hologram, or some complicated future vision.

To start, a form is enough. A face. A voice. A personality.

Something that shows me: this AI is thinking right now. It is speaking. It is waiting. It is using a tool. It is not just a stream of text, but a small digital actor in my personal virtual space, and maybe one day in a real one.

This is where the idea of AI companions begins for me.

A good AI companion is an interface for a personal local large language model. It makes something feel alive that would otherwise remain lifeless. It gives a local LLM a role, a context, and a sense of presence. It can talk to me, react, use tools, and become part of a small digital environment that belongs to me.

That is why I am building Quanty AI.

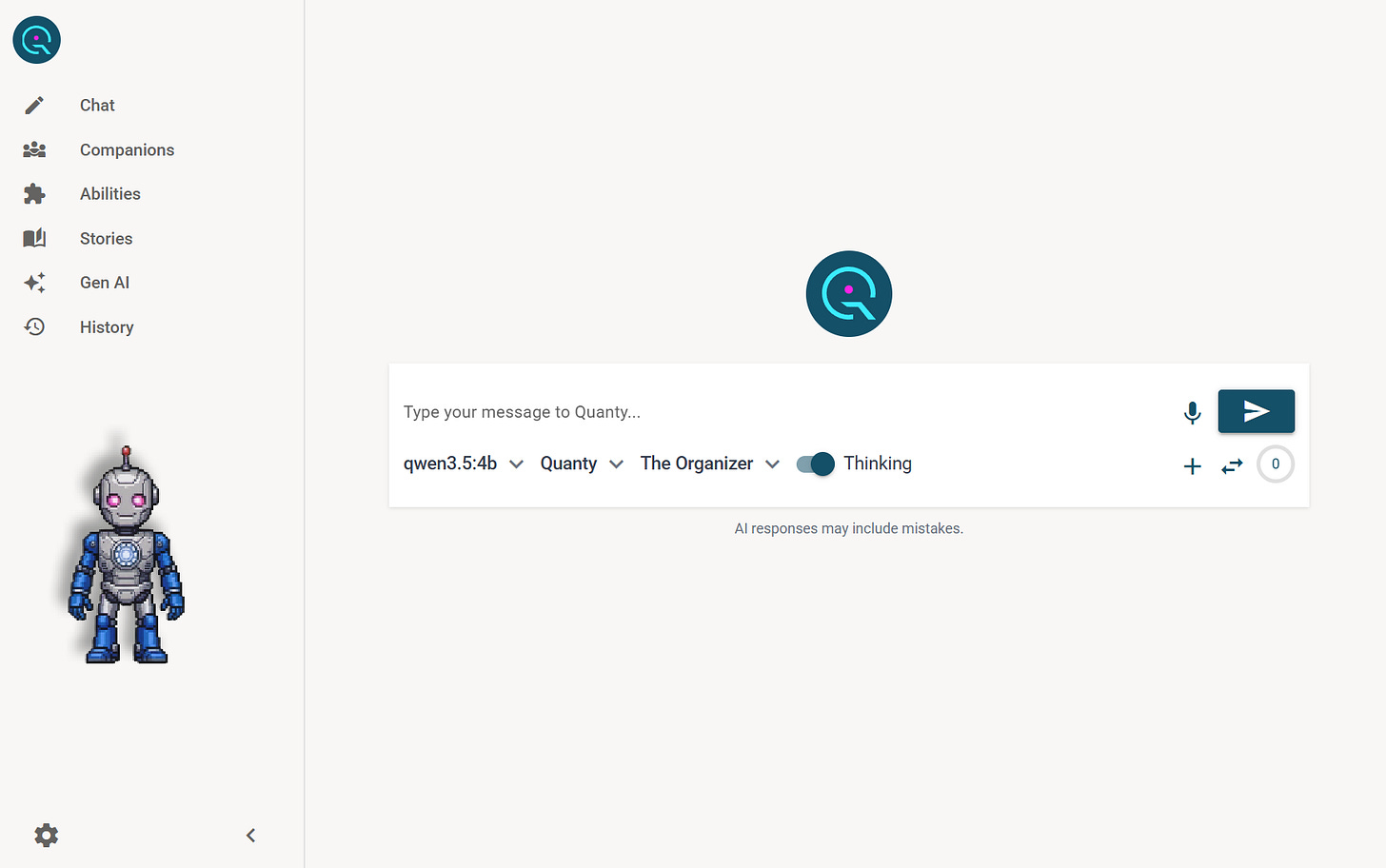

Quanty AI is my attempt to take local AI out of the chat window and give it a home.

It is a local AI companion playground for Windows and macOS. At its core, the reasoning runs locally through Ollama. On top of that, users can create animated pixel-art companions, give them personalities, assign roles, and equip them with skills.
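To make that layering a bit more concrete, here is a minimal Python sketch of how a companion's personality and role could be turned into a request to a locally running Ollama server. This is not Quanty AI's actual code. The `Companion` class, its fields, the prompt wording, and the model name `llama3.2` are all assumptions made up for illustration. The only part taken from Ollama itself is the local `/api/chat` endpoint on its default port 11434.

```python
import json
import urllib.request
from dataclasses import dataclass, field

# Default address of a locally running Ollama server.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


@dataclass
class Companion:
    """Hypothetical companion description (illustrative, not Quanty AI's schema)."""
    name: str
    personality: str
    role: str
    skills: list[str] = field(default_factory=list)

    def system_prompt(self) -> str:
        # Fold personality, role, and skills into one system message.
        skills = ", ".join(self.skills) if self.skills else "none"
        return (
            f"You are {self.name}, a local AI companion. "
            f"Personality: {self.personality}. Role: {self.role}. "
            f"Skills: {skills}."
        )


def build_chat_payload(companion: Companion, user_text: str,
                       model: str = "llama3.2") -> dict:
    """Build the JSON body for Ollama's /api/chat endpoint.

    The model name is an assumption; use whatever model you have pulled.
    """
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": companion.system_prompt()},
            {"role": "user", "content": user_text},
        ],
        "stream": False,  # ask for one complete reply instead of a token stream
    }


def ask(companion: Companion, user_text: str, model: str = "llama3.2") -> str:
    """Send the message to the local Ollama server and return the reply text.

    Requires Ollama to be running on this machine; nothing is sent elsewhere.
    """
    body = json.dumps(build_chat_payload(companion, user_text, model)).encode("utf-8")
    request = urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        reply = json.load(response)
    return reply["message"]["content"]
```

With Ollama running and a model pulled, something like `ask(Companion("Pixel", "curious and playful", "writing buddy"), "Hi!")` would return the companion's answer as a string. Everything visual, such as the pixel-art animation, would sit on top of a call like this.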

I want to give them a place where a model does not just answer, but feels present. A place where your companion does not only have to be productive, but can also be creative, playful, and personal.

For Quanty AI, I am increasingly trying to establish the motto:

Presence over Productivity.

For me, a big part of the magic is that local models can eventually become small companions. Not perfect. Not all-knowing. But close enough to become part of our digital everyday life.

This is the direction I am currently heading in:

Virtual local AI companions that live on your computer. Companions that not only get things done through skills, but are also creative and fun to spend time with. Not only in everyday life, but also inside virtual worlds and stories.

If you like, take the first step into the Quantyverse.

P.S.: I’m building Quanty AI, a local AI companion playground for Windows and macOS.

If you want to follow the build, subscribe here on Substack.

Best,

Thomas